DISCRIMINATORY LENDING PRACTICES & BIASED ALGORITHMS

Discriminatory Lending Lawsuits

- Discriminatory lending practices have made it more difficult for certain individuals to qualify for mortgages and small-business loans

- Lending discrimination occurs when lenders base credit decisions on factors other than an applicant’s creditworthiness

- Biased algorithms, programmed by banks, financial institutions and other corporate entities, may contribute to unfair lending practices

- Consumer protection laws have been established to forbid unfair loan practices

Compiling consumer data and using sophisticated AI-powered lending programs create an opportunity to transform how companies allocate credit and risk, and ultimately to create a more transparent and fair credit system. However, when financial institutions program a bias into their loan algorithms, it can worsen an already existing bias, leading to more lending discrimination and unfair lending practices.

The Lyon Firm is investigating consumer protection violations that may involve biased lending algorithms and other discriminatory lending practices. Any corporate act of lending discrimination based on race, color, religion, sex, national origin, handicap, familial status, age, or amount of public assistance income may be grounds for legal action, and a potential consumer protection lawsuit.

The following federal laws are meant to offer protection against lending discrimination:

- The Fair Housing Act (FHA)

- The Equal Credit Opportunity Act (ECOA)

- The Community Reinvestment Act (CRA)

But even with existing laws that forbid such practices, lending discrimination is a lot more common than many think. In fact, with lenders able to program risk analysis software with potentially biased algorithms, unfair lending may be as pervasive as ever before.

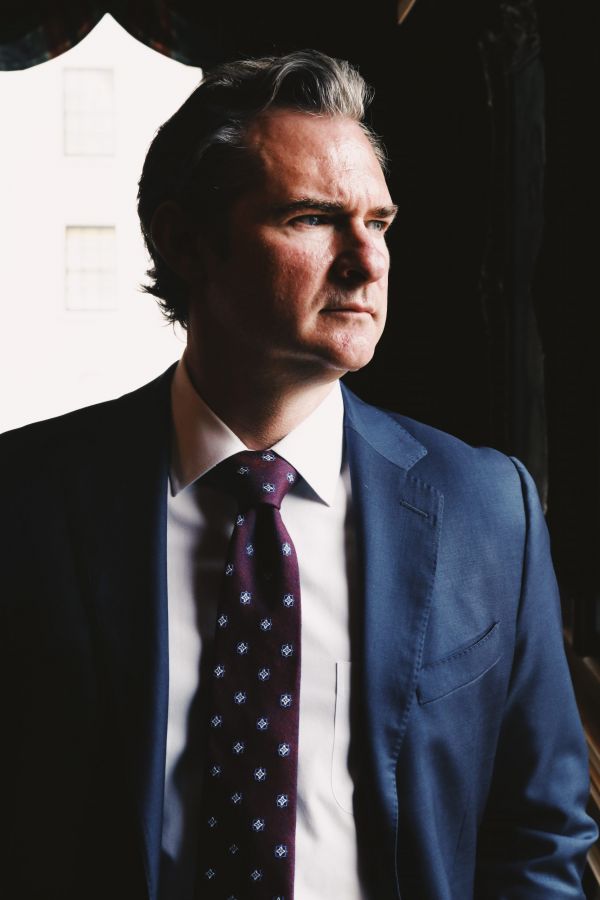

Joe Lyon is an experienced Consumer Protection Attorney, reviewing Digital Discrimination, Biased Algorithms, and Unfair Lending cases for plaintiffs nationwide.

Types of Discriminatory Lending

Consumer advocates have suggested that some banks and financial institutions have programmed their lending algorithms to be biased, and may be violating several state and federal statutes. By law, lenders may not discriminate or base credit decisions on the following:

- Race or Color: Studies show that both online and face-to-face mortgage lenders charge higher interest rates to borrowers of color. Lenders are prohibited from asking about a person’s race or ethnicity on a loan application; this information may only be disclosed voluntarily. Race cannot be used in any way by a lender to make a credit decision.

- Religion: It is illegal for lenders to discourage applicants from applying for credit or reject a loan application because of a religious affiliation.

- National origin: Lenders cannot inquire about a person’s ancestry or the origin of their surname, or discriminate against non-English speakers. Creditors do have the right to ask about immigration status and whether an applicant has the right to remain in the country long enough to repay any outstanding debts.

- Sex: There have been many instances of gender discrimination where loan officers rate a female’s credit lower than a male’s, with all other things equal, including their occupation. Many women have also had credit denied due to income that includes part-time jobs, alimony or child support, all historically female-centric. Many women have reported loan officers asking about their husband’s job, but not vice versa. Pregnant women have also seen a fair amount of discrimination due to the possibility of maternity leave.

- Marital status: Lenders may ask specifically if an applicant is married, single, or separated, but not if they are divorced or widowed. Lenders, however, can require spousal information if the spouse will be permitted to use, or be liable for, an account, or if the applicant lives in a community property state.

- Age: Lenders cannot discriminate against borrowers solely based on age, but can use age to determine the possibility of impending retirement.

- Source of income: Lenders cannot discriminate against applicants because of income derived from public assistance, child support, alimony, Social Security, part-time employment, pensions or annuities.

- Sexual orientation: There are currently no federal laws prohibiting credit discrimination based on sexual orientation, though some state laws exist.

Mortgage Lending Discrimination

Even with many protections in place to prohibit such bias, Black and Hispanic Americans are still denied mortgages or offered loans at a higher rate than their white counterparts. Data collected under the Home Mortgage Disclosure Act (HMDA) and compiled by the Consumer Financial Protection Bureau (CFPB) show not only a high rate of discrimination in decisions on whether to accept an application, but also that many borrowers of color wind up with a higher-priced loan compared to white applicants.

This modern-day redlining has been documented in almost every major metro area in America. No matter a loan applicant’s location, many describe disproportionate denials and unfair loan practices.

Mortgage lending discrimination, the denial of loans based on race, color, sex, religion or national origin, is one of the most common forms of lending bias. When a bank or other lender receives a mortgage application and bases its decision on factors other than creditworthiness, the victims may file a claim and contact an attorney to review.

Small Business Loan Bias

Digital discrimination goes beyond mortgage lending. A report from The Business Journals found White neighborhoods receive about twice as much per person in small-business loans compared with Black neighborhoods.

Some concerning credit reports note that in recent years the number of loans made to Black-owned businesses has decreased dramatically, despite positive trends in overall loans awarded. Some consumer protection firms have investigated, looking for evidence of obvious discrimination in small-business lending.

A report by the New York Times showed that 75 percent of the government’s initial round of Paycheck Protection Program loans went to businesses in White-majority areas. Citi, Bank of America, JPMorgan, and Wells Fargo, America’s four largest banks, made 91 percent fewer small-business loans to Black-owned businesses in 2019 than in 2007. Whether this is due to relatively new algorithmic bias or to other factors is not entirely clear, but it is certainly cause for suspecting unfair lending practices.

Clear Signs of Lending Discrimination

Some obvious warning signs of discriminatory lending practices may include:

- You are denied credit that you qualify for

- You are approved for a loan but at a higher rate than what you applied for (when you should qualify for a lower rate)

- A lender advises you not to apply for credit without a good reason

- You are treated differently over the phone than in person

- You hear discriminatory language

- An online lending program asks questions about race, sex, or national origin

What is Digital Discrimination?

Digital discrimination is a relatively new form of bias in which software treats applications differently based on the personal data processed by an algorithm. Digital discrimination often mirrors an existing thread of discrimination by inheriting the biases of prior decision-makers or of those who programmed the algorithm.

Consumer protection attorneys are demanding more transparency and more liability for companies who negligently serve consumers with biased algorithmic risk analysis software. The Lyon Firm feels very strongly that companies have a duty to ensure their systems are free of bias and discrimination.

This is a complex area of law, and while it may be difficult to prove companies willfully create biased algorithms and embrace digital discrimination, there may be enough evidence to prove their negligence in overseeing the shortfalls of technology like automated decision-making.

How are Lending Algorithms Biased?

Acknowledging the existence and causes of bias in lending software is a logical first step in solving the problem. Bias in algorithms may be born of incomplete training data, the reliance on flawed information, or historical inequalities.

Biased algorithms may not always be an intentional act of discrimination, or some malicious method of holding certain minority groups down, but rather a lack of data, or simply a poorly programmed lending tool. Computer-generated pricing systems may discriminate against minority borrowers because they tend to shop less than white borrowers, according to a recent study from the University of California, Berkeley.

Either way, lenders have a responsibility to provide all clients with the same opportunity for loans.
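One simple way to start checking lending software for the kind of bias described above is to compare approval rates across applicant groups. The sketch below is illustrative only: the sample records, the group labels "A" and "B", and the function names `approval_rates` and `approval_rate_ratio` are hypothetical and not taken from any real lender or dataset. A low ratio points to a disparity worth investigating. It is not, by itself, proof of unlawful discrimination.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of approved applications for each group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True or False. This input format is an assumption
    made for the example.
    """
    totals = defaultdict(int)
    approved_counts = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approved_counts[group] += 1
    return {group: approved_counts[group] / totals[group] for group in totals}


def approval_rate_ratio(rates, group, reference_group):
    """Ratio of one group's approval rate to a reference group's rate.

    Assumes the reference group's approval rate is greater than zero.
    """
    return rates[group] / rates[reference_group]


# Hypothetical decisions produced by a lending algorithm (not real data).
sample = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)

rates = approval_rates(sample)
ratio = approval_rate_ratio(rates, "B", "A")
print(rates)
print(round(ratio, 3))
```

A comparison like this only flags a gap in outcomes. Explaining the gap still requires looking at creditworthiness factors and at how the algorithm was built and trained.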

Why Should I Hire The Lyon Firm?

The experienced attorneys at The Lyon Firm have the knowledge and resources to tackle novel legal claims such as those involving biased algorithms and digital discrimination.

Discriminatory lending is a complex area of law that requires the attention of an experienced lawyer. When compounded with additional issues such as biased algorithms and inherently biased systems, it can become even more complex. Allow The Lyon Firm to investigate your unique case, and fight on your behalf following an overt act of discrimination.

ABOUT THE LYON FIRM

Joseph Lyon has 17 years of experience representing individuals in complex litigation matters. He has represented individuals in every state against many of the largest companies in the world.

The Firm focuses on single-event civil cases and class actions involving corporate neglect & fraud, toxic exposure, product defects & recalls, medical malpractice, and invasion of privacy.

NO COST UNLESS WE WIN

The Firm offers contingency fees, advancing all costs of the litigation and accepting the full financial risk. This gives our clients full access to the legal system and reduces financial stress while they focus on their healthcare and financial needs.

Why are Class Actions Important?

Without class actions, large corporate defendants would be able to cause small amounts of harm over a large group of individuals without any risk of monetary penalty.

Class actions allow a plaintiff who would ordinarily not have access to the Court to bring a collective action. Class actions enforce regulatory statutes & common law causes of action that keep companies honest and hold them accountable when they deceive the public or fall below acceptable industry standards.

Discriminatory Lending FAQs

There are federal laws that specifically prohibit lending discrimination, and lenders are not allowed to engage in the following:

- Base the loan on age or race

- Steer applicants away from applying for a loan based on protected class

- Charge higher interest rates or fees based on protected class

- Ask applicants whether they are divorced or widowed

- Ask if an applicant is married (if outside a community property state)

- Ask about an applicant’s spouse

- Ask about plans for starting or raising a family

- Ask an applicant if they receive alimony or child support (unless the income is needed to qualify for the loan)

Several laws protect consumers from unfair lending.

The Fair Housing Act (FHA) and the Equal Credit Opportunity Act (ECOA) protect consumers by prohibiting unfair and discriminatory practices. The Home Ownership and Equity Protection Act (HOEPA) protects consumers from excessive fees and interest rates.

The Consumer Credit Protection Act (CCPA) and The Truth in Lending Act (TILA) help protect consumers from abusive loan practices. These laws place restrictions on banks, credit card issuers, and debt collectors.

At least 25 states have additional anti-predatory lending laws.

- You are encouraged to research as much as possible before applying for a mortgage or small business loan. Know what interest rates should be for someone with your credit score, assets and income, and compare that to what you are offered.

- One of the best deterrents to lending discrimination is to ask a lot of questions. If the quoted rate or fees seem high, a loan officer should provide detailed answers about the rates and costs.

- Shop around in person and online. Don’t accept the first loan you’re quoted. Experts suggest looking at three to five different lenders to ensure you get the best deal.

- And the number one rule in credit terms is, “If you don’t understand it, don’t sign it.”

Common examples of algorithmic bias include:

- Bias in online loan application software

- Bias in online recruitment tools

- Bias in health insurance quotes

- Bias in criminal justice algorithms

Some lending discrimination is rather obvious, like when a lender denies a loan for a woman on maternity leave until she returns to work, or if a rate is inexplicably higher for a person of color than a white applicant.

Other forms of discrimination are not as obvious, such as biased algorithms in lending software, and a full expert investigation may be necessary. But in the end, all unfair lending practices are treated the same in a court of law.

If you or a loved one have been affected by discriminatory lending practices, contact an attorney to review your case. If you suspect your lender has a biased algorithm and wonder if it may have affected your loan application, call The Lyon Firm for a free and confidential consultation at (513) 540-3689.

- Answer a few general questions.

- A member of our legal team will review your case.

- We will determine, together with you, what makes sense for the next step for you and your family to take.